5 Surprising Truths About Semantic Search That Go Beyond the Hype

If you’ve built a simple Retrieval-Augmented Generation (RAG) system, you know the feeling. You feed it a few PDFs, ask a question, and get a coherent, fact-based answer. It feels like you’ve cracked the code to Semantic Search. But this simple approach shatters the moment it meets reality. When faced with thousands of documents, messy formatting, and the relentless demands of a production environment, the demo that worked perfectly on your laptop becomes a high-latency, hallucination-prone nightmare.

This article reveals five surprising but critical truths for building Semantic Search systems that actually work in production. Pulled from the insights of system architects and recent industry data, these lessons go beyond the initial hype to deliver a clear blueprint for engineering robust, scalable, and genuinely intelligent search applications.

1. Your Off-the-Shelf Embedding Model Is Probably Dumber Than You Think

Using a general-purpose embedding model from a major provider like OpenAI or Google for a specific business use case can feel like a smart shortcut. The surprising truth is that it can produce search results that are significantly worse than those from traditional keyword search algorithms like BM25. These models are trained on broad internet data and lack the specific context to understand your company’s internal jargon, product names, or unique business logic.

As Marqo CEO Tom Hamer notes, the quality of your entire system hinges on this initial step:

“Vector similarity search is only as good as the vector embeddings are.”

The “secret sauce” for effective Semantic Search is fine-tuning the embedding model on your own domain-specific data. Training on datasets like user search histories or product purchase data teaches the model what matters to your business. Incorporating a metric like purchase frequency, for example, teaches it that a frequently bought item is a more “popular” and relevant result for a general query. The model learns your business’s own definition of relevance and user intent, rather than relying on the generic understanding of language it was originally trained on. This matters because the model’s ability to understand your specific world is the foundation of your entire RAG system’s intelligence.
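To make the purchase-frequency idea concrete, here is a minimal sketch of how purchase logs might be turned into weighted training examples. The log format, the product names, and the `build_training_pairs` helper are all hypothetical, and the fine-tuning step itself (for example, a contrastive loss over these pairs) is deliberately left out.

```python
from collections import defaultdict

# Hypothetical purchase log: (search query, product title, number of purchases).
PURCHASE_LOG = [
    ("running shoes", "TrailMax 2 Running Shoe", 120),
    ("running shoes", "CityWalk Leather Loafer", 3),
    ("running shoes", "SprintLite Racing Flat", 45),
    ("rain jacket", "StormShell Waterproof Jacket", 80),
]


def build_training_pairs(log, min_purchases=10):
    """Turn purchase logs into (query, positive, weight) training examples.

    Products bought at least `min_purchases` times after a query are kept as
    positives. The weight is the product's share of the purchases kept for
    that query, so frequently bought items count more during fine-tuning.
    """
    by_query = defaultdict(list)
    for query, product, count in log:
        if count >= min_purchases:
            by_query[query].append((product, count))

    pairs = []
    for query, items in by_query.items():
        total = sum(count for _, count in items)
        for product, count in items:
            pairs.append((query, product, count / total))
    return pairs


for query, product, weight in build_training_pairs(PURCHASE_LOG):
    print(f"{query!r} -> {product!r} (weight {weight:.2f})")
```

The rarely purchased loafer is filtered out as noise, while the two shoes that people actually buy after searching “running shoes” become positives with weights proportional to their sales.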

2. How You Split Your Documents Matters More Than You Imagine

The default approach in many RAG tutorials is fixed-size chunking—splitting documents into uniform blocks of a few hundred characters. For any complex document, this is a “terrible idea.” This naive method has no respect for the semantic meaning of the text, often splitting sentences, paragraphs, or even words right down the middle. This creates fragmented, context-poor chunks that confuse the LLM and lead to incomplete answers.
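A few lines of Python are enough to see the problem. This is a minimal sketch of fixed-size chunking on a made-up two-sentence policy text; the chunk size of 25 characters is arbitrary and chosen only to make the damage visible.

```python
def fixed_size_chunks(text, size):
    """Split text into consecutive blocks of at most `size` characters."""
    return [text[i:i + size] for i in range(0, len(text), size)]


text = "Refunds are processed within 14 days. Contact support for exceptions."
for chunk in fixed_size_chunks(text, 25):
    print(repr(chunk))
```

The first chunk ends in the middle of “within” and the second ends in the middle of “support”, so no single chunk contains either sentence in full.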

More sophisticated and effective chunking strategies treat your documents not as raw text, but as structured knowledge:

- Recursive Character Splitting: A smarter default that tries to split text along natural boundaries. It attempts to divide by paragraphs first, then sentences, and so on, helping to keep semantically related text together.

- Semantic Chunking: Instead of splitting by length, this method groups sentences by their meaning. It clusters semantically similar sentences into a single, cohesive chunk, ensuring the context remains tight and relevant.

- Content-Aware Chunking: This strategy uses the document’s own structure to guide the splitting process. For example, it splits Markdown files by headers, HTML by tags, or code files by functions and classes, creating self-contained chunks that respect the author’s original layout.

- Agentic Chunking: An emerging technique where an LLM agent analyzes the document to determine logical boundaries, acting like a human editor. The agent uses its understanding of the content to decide the most logical places to create chunk breaks.
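Recursive character splitting, the first strategy in the list above, can be sketched in plain Python. This is a simplified illustration rather than any particular library’s implementation: the separator list and the `recursive_split` name are assumptions, and separators that fall on a chunk boundary are dropped.

```python
def recursive_split(text, max_len, separators=("\n\n", ". ", " ")):
    """Split `text` into pieces of at most `max_len` characters.

    Tries the coarsest separator first (paragraphs), then falls back to finer
    ones (sentences, words) only for pieces that are still too long.
    """
    if len(text) <= max_len:
        return [text] if text.strip() else []
    if not separators:
        # No natural boundary left: fall back to a hard cut.
        return [text[i:i + max_len] for i in range(0, len(text), max_len)]

    sep, finer = separators[0], separators[1:]
    chunks, current = [], ""
    for part in text.split(sep):
        candidate = current + sep + part if current else part
        if len(candidate) <= max_len:
            current = candidate  # keep merging neighbours while they fit
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(part) <= max_len:
            current = part
        else:
            # This piece alone is too long: retry it with finer separators.
            chunks.extend(recursive_split(part, max_len, finer))
    if current:
        chunks.append(current)
    return chunks


doc = (
    "Refunds are processed within 14 days. Contact support for exceptions."
    "\n\nShipping is free on orders over 50 euros."
)
for chunk in recursive_split(doc, max_len=45):
    print(repr(chunk))
```

With the same 45-character budget that would force a fixed-size splitter to cut mid-word, every chunk here is a complete sentence.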

This is a critical, counter-intuitive point. Effective chunking isn’t just about splitting text; it’s a sophisticated process of modeling the knowledge contained within your documents to make it structured, accessible, and useful for the LLM.
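As one example of treating structure as knowledge, content-aware chunking for Markdown can be sketched as follows. The `split_markdown_by_headers` helper is hypothetical, and the sketch assumes ATX-style headers (`#` to `######`) and ignores edge cases such as `#` characters inside fenced code blocks.

```python
import re

# ATX-style Markdown header: one to six '#' characters, then the title.
HEADER = re.compile(r"^(#{1,6})\s+(.*)$")


def split_markdown_by_headers(markdown):
    """Return one chunk per Markdown section, labelled with its header text."""
    chunks, title, body = [], "(preamble)", []

    def flush():
        # Emit the section collected so far, skipping empty ones.
        text = "\n".join(body).strip()
        if text:
            chunks.append({"title": title, "text": text})

    for line in markdown.splitlines():
        match = HEADER.match(line)
        if match:
            flush()
            title, body = match.group(2).strip(), []
        else:
            body.append(line)
    flush()
    return chunks


doc = """# Returns
Refunds are processed within 14 days.

## Exceptions
Contact support for damaged items.

# Shipping
Shipping is free on orders over 50 euros.
"""
for chunk in split_markdown_by_headers(doc):
    print(chunk["title"], "->", chunk["text"])
```

Keeping the header alongside each chunk gives the retriever and the LLM a label for what the text is about, which a raw character window cannot provide.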

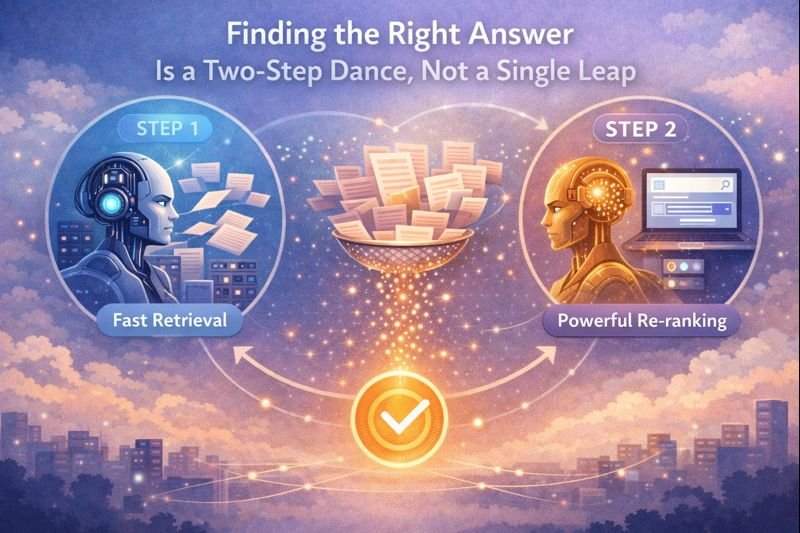

3. Finding the Right Answer Is a Two-Step Dance, Not a Single Leap

Relying on a single vector search to find the best answer across thousands or millions of documents is a recipe for failure. The fastest search algorithms aren’t always the most accurate, and the most accurate models are too slow to run on an entire database. For this reason, a two-stage retrieval process is non-negotiable for production-grade systems.

This architecture breaks the problem into two distinct steps:

- The Fast Retriever: First, you use a fast, approximate search index (like HNSW) to cast a wide net. This stage rapidly retrieves a large number of potentially relevant documents, for example the top 50 or 100 candidates. This step is optimized for recall, ensuring the correct answer is almost certainly somewhere in the retrieved set. (This stage typically uses a bi-encoder model, which creates embeddings for the query and documents independently for speed.)

- The Powerful Re-ranker: Second, you take this smaller set of candidates and use a more computationally expensive but far more accurate model to re-score each one against the user’s query. This pushes the best results to the very top. This step is optimized for precision, ensuring the final context given to the LLM is as relevant as possible. (This stage uses a cross-encoder, which evaluates the query and each document together, allowing for a much deeper but slower analysis of relevance.)
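The shape of this retrieve-then-re-rank pipeline can be sketched with toy stand-ins. Nothing below is a real bi-encoder, cross-encoder, or HNSW index: `embed` is a bag-of-words counter, `retrieve` is a brute-force scan, and `toy_pair_score` simply rewards query word pairs that appear in the same order in the document. The sample documents are invented for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a bi-encoder: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, index, k):
    """Stage 1: compare the query to precomputed document vectors, keep top k."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def toy_pair_score(query, doc):
    """Toy stand-in for a cross-encoder: reads query and document together
    and counts adjacent query word pairs that also appear in the document."""
    q, d = query.lower().split(), doc.lower().split()
    doc_bigrams = set(zip(d, d[1:]))
    return sum(1 for pair in zip(q, q[1:]) if pair in doc_bigrams)


def rerank(query, candidates, score_pair, top_n):
    """Stage 2: re-score each (query, document) pair jointly, keep top n."""
    ranked = sorted(candidates, key=lambda doc: score_pair(query, doc), reverse=True)
    return ranked[:top_n]


DOCS = [
    "shipping times vary by region",
    "the password reset email can take a few minutes",
    "how to reset your email password",
    "your account page lists recent orders",
]
index = [(doc, embed(doc)) for doc in DOCS]  # document vectors computed once

query = "password reset email"
candidates = retrieve(query, index, k=3)
best = rerank(query, candidates, toy_pair_score, top_n=1)
print("stage 1:", candidates)
print("stage 2:", best)
```

Stage 1 puts “how to reset your email password” first because it shares all three query words, even though it answers a different question. Stage 2, which looks at the query and each candidate together, promotes the document that is actually about the password reset email.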

This two-stage architecture is the standard for production systems because it resolves the fundamental trade-off between latency and relevance, providing the sub-second response times users expect without sacrificing the deep semantic accuracy required for correct answers.

A valuable addition to this process is Hybrid Search, which combines vector search with traditional keyword search (like BM25). This ensures that specific product codes, acronyms, or names that vector search might miss are still reliably found.
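Hybrid Search needs some way to merge the vector results and the keyword results into one list. One widely used option is reciprocal rank fusion (RRF), sketched below; it is an assumption on our part that RRF is the fusion method, and the document IDs are made up.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores the sum of 1 / (k + rank) over the lists it appears
    in, with ranks starting at 1. k=60 is a commonly used default.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical results for a query that contains an exact product code.
vector_hits = ["doc_overview", "doc_pricing", "doc_sku_4471"]
keyword_hits = ["doc_sku_4471", "doc_overview", "doc_changelog"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

The document containing the exact product code was last in the vector results, but its top keyword rank lifts it to second place in the fused list. RRF only needs ranks, so it avoids having to calibrate BM25 scores against cosine similarities.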

4. "Vector Search" Isn't the Goal—It's Just the Plumbing

The terms “vector search” and “semantic search” are often used interchangeably, but they represent two different layers of an application. Vector search is the underlying infrastructure—the plumbing that uses embeddings to find a list of mathematically similar items. It is a powerful but incomplete tool.

True semantic search requires an additional layer of ranking and personalization built on top of the vector search results. This is where business logic comes in. Consider a query like “revenue decline in Europe.” Vector search is what finds documents whose embeddings are mathematically close to the query’s. The semantic layer is what understands the user’s intent and connects that query to sales reports, regional performance dashboards, and related customer feedback, even if those documents don’t use the exact word “decline.”

This semantic layer is only effective if the underlying ‘plumbing’ (your vector embeddings) has already been fine-tuned to understand your specific domain, as discussed earlier. Without that foundation, even the most sophisticated ranking logic is working with flawed data. This distinction is critical for anyone building an AI application: the goal isn’t just to find a list of similar vectors, but to deliver a relevant, valuable, and contextually aware answer that serves the user’s ultimate goal.
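A ranking layer on top of vector search can be as simple as adjusting similarity scores with business rules. The sketch below is illustrative only: the `Hit` fields, the boost values, and the document IDs are hypothetical, and a real system would tune or learn these signals rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    doc_id: str
    similarity: float  # score from the vector search stage, assumed in [0, 1]
    doc_type: str
    region: str


def semantic_rank(hits, user_region, preferred_types,
                  type_boost=0.15, region_boost=0.10):
    """Re-order vector search hits using simple, hypothetical business rules."""
    def score(hit):
        total = hit.similarity
        if hit.doc_type in preferred_types:
            total += type_boost      # e.g. analysts want reports, not blog posts
        if hit.region == user_region:
            total += region_boost    # personalize to the user's own region
        return total

    return sorted(hits, key=score, reverse=True)


# Hypothetical vector search results for "revenue decline in Europe".
hits = [
    Hit("blog_europe_trends", 0.82, "blog", "EU"),
    Hit("q3_sales_report_eu", 0.78, "sales_report", "EU"),
    Hit("q3_sales_report_us", 0.70, "sales_report", "US"),
]
ranked = semantic_rank(hits, user_region="EU", preferred_types={"sales_report"})
print([hit.doc_id for hit in ranked])
```

The blog post is the nearest vector, but the European sales report ends up first once document type and region are taken into account.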

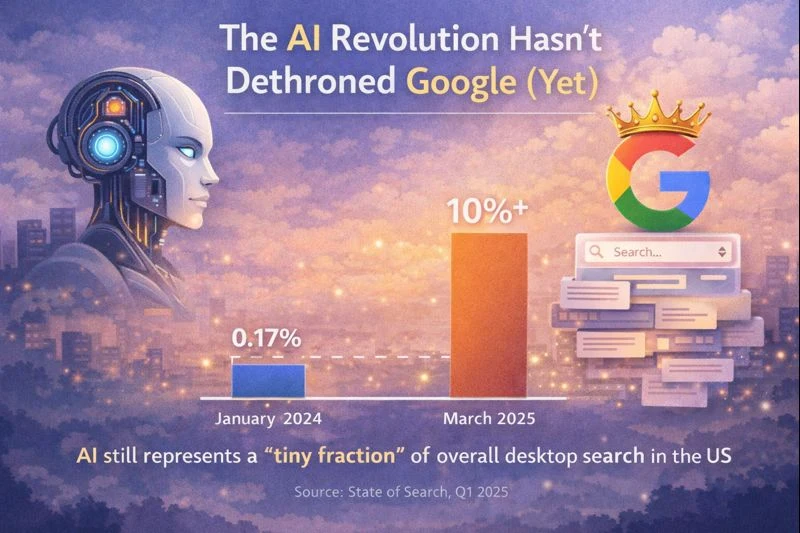

5. The AI Revolution Hasn't Dethroned Google (Yet)

Despite a media narrative suggesting a massive, immediate shift in user behavior, the AI revolution has yet to displace traditional search. According to the “State of Search Q1 2025” report, AI tools still represent a “tiny fraction” of overall web usage.

The data provides a clear picture of the current landscape. In the United States, the share of desktop events on AI platforms grew from just 0.17% in January 2024 to 0.55% in March 2025. By comparison, traditional search still accounted for over 10% of all desktop events in March 2025.
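A quick back-of-the-envelope calculation puts those percentages in proportion. The inputs are the figures quoted above; because the report says search was “over 10%”, the second ratio is a lower bound.

```python
ai_share_jan_2024 = 0.17   # % of US desktop events on AI platforms
ai_share_mar_2025 = 0.55
search_share_floor = 10.0  # "over 10%", so treat this as a minimum

growth = ai_share_mar_2025 / ai_share_jan_2024
search_vs_ai = search_share_floor / ai_share_mar_2025

print(f"AI share grew about {growth:.1f}x")
print(f"Search was still at least {search_vs_ai:.0f}x larger than AI")
```

In other words, AI usage roughly tripled over the period, yet traditional search remained at least 18 times larger.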

As SparkToro Co-founder Rand Fishkin puts it:

“Yes, AI tools are popular, and yes, they’re growing. But despite the media hype, AI is still a tiny fraction of overall usage in the US, EU, and UK.”

Furthermore, the data shows that Google continues to overwhelmingly dominate the traditional search space. This doesn’t diminish the transformative potential of AI, but it does place it in a realistic context. New technology follows a familiar adoption curve, and it is essential to ground our understanding of the AI revolution in real-world data rather than just headlines.

Conclusion: Building for Reality

Building a simple RAG demo is an exciting first step, but deploying a robust, production-grade Semantic Search system is a serious engineering challenge that requires moving far beyond the basics. From fine-tuning your models to architecting a multi-stage retrieval pipeline, success depends on a deep understanding of the complex systems that turn a mountain of data into a single, correct answer.

As we build the next generation of intelligent applications, the question we must ask is not just whether our AI can find an answer, but whether we’ve built the architecture required for it to find the right one.